Since the first generative AI tools arrived, architecture has lived with a fairly clear separation between two families of tools. On the one hand, what we call Generative AI has helped us explore different atmospheres, typologies, materials and design languages at very high speed and with an enormous capacity for iteration. On the other hand, Generative Design has focused on optimization, design constraints, regulations and performance. The gap between the first image and the underlying structure was so wide that using AI in design was sometimes simply unviable.

“The stroke has intention, but AI lacks it.” This is a phrase I repeat many times when I talk about what it means to design something. I do not claim it as my own, but I do share the line of reasoning it leads us to. For years we have lived with a generative AI that served us, at best, to inspire what would later become the first sketches. Now the story is changing.

Has AI finally understood architectural intention? Can it glimpse the ultimate intention that sometimes the architect is not even fully aware of when drawing a line? No, clearly not. But it has learned the lesson we are taught at university from the very first classes: “be careful with the lines you draw, because in real life they become walls.” AI has taken a huge step forward in understanding what lies behind a design and, with that, its capabilities have changed radically.

If until now we had Generative AI tools clearly separated from Generative Design tools, in 2026 that separation is starting to become much more blurred. Autodesk already describes Neural CAD as a new category of foundation models capable of reasoning directly over CAD objects, and along that same line it places Neural CAD for buildings in its Forma app, with the ability to translate between a conceptual model of masses and layouts and the final design of a room or space.

It may not seem like much, but the evolution we are seeing changes a great deal for architects, computational designers, BIM managers and consultants. When we work in the early phases, we want speed, and when the project matures, we look for traceability, spatial coherence, compliance and performance. Autodesk is aiming precisely at that combination when it speaks about tools designed to accelerate the process while maintaining creative control and gaining real-time analysis capabilities. It also reminds us that BIM and IFC describe identity, properties, relationships, processes and systems, that is, much more than a geometric shape. With this information, AI takes on a new meaning, and digital architecture gains a completely new depth.

Generative AI and Generative Design start from different logics

The basic difference between Generative AI and Generative Design is still worth keeping in mind, because each one comes from a different way of producing results.

The former works through patterns and probability, through our own language and through large volumes of data. The latter works with objectives, constraints, parameters and the constant evaluation of alternatives according to the results we are seeking.

Autodesk sums it up very well by distinguishing AI that expands creative exploration from the Generative Design we find, for example, in Revit, which generates alternatives to our design based on goals, inputs and constraints in order to shape our decisions. One imagines possibilities; the other makes those possibilities possible.
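The logic of goals, inputs and constraints can be made concrete with a toy example. What follows is a minimal sketch, not Revit's Generative Design or any Autodesk API: every name and number in it (the height limit, the minimum floor area, the daylight proxy) is a hypothetical assumption chosen only to show the pattern of generating alternatives, discarding those that break a constraint, and ranking the rest against a goal.

```python
from dataclasses import dataclass
from itertools import product

# All names and numbers below are hypothetical, chosen only for illustration.
FOOTPRINT_WIDTH_M = 30.0     # fixed input
FLOOR_HEIGHT_M = 3.5         # fixed input
MAX_HEIGHT_M = 21.0          # constraint: assumed zoning height limit
MIN_FLOOR_AREA_M2 = 2000.0   # constraint: assumed requirement from the brief


@dataclass(frozen=True)
class Option:
    floors: int      # parameter
    depth_m: float   # parameter: plan depth
    wwr: float       # parameter: window-to-wall ratio


def floor_area(o: Option) -> float:
    """Total floor area of a simple rectangular block."""
    return o.floors * FOOTPRINT_WIDTH_M * o.depth_m


def is_feasible(o: Option) -> bool:
    """An option survives only if it meets every constraint."""
    return (o.floors * FLOOR_HEIGHT_M <= MAX_HEIGHT_M
            and floor_area(o) >= MIN_FLOOR_AREA_M2)


def daylight_score(o: Option) -> float:
    """Toy goal: more glazing and a shallower plan score higher."""
    return o.wwr / o.depth_m


# 1. Generate alternatives from the parameter ranges.
candidates = [
    Option(floors, depth, wwr)
    for floors, depth, wwr in product(range(3, 9),
                                      (12.0, 15.0, 18.0),
                                      (0.3, 0.4, 0.5))
]
# 2. Filter by constraints. 3. Rank by the goal.
feasible = [o for o in candidates if is_feasible(o)]
best = max(feasible, key=daylight_score)

print(f"{len(feasible)} of {len(candidates)} options are feasible; best: {best}")
```

Real tools search much larger spaces with several competing goals, but the division of labour is the same: we define the rules and the criteria, and the machine does the iterating.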

With that difference clear, when we are in the concept phase, Generative AI offers us enormous power to open up the project’s visual field. It helps us explore a façade, the density of space, materiality, scale, or even the atmospheric experience itself almost as quickly as we can formulate an intention. The best part is that this is precisely where we do not want to apply too many constraints.

We have a tool that greatly accelerates the studio’s internal conversation, radically changes the quality of references and presentations, and turns a verbal intuition into something visible in just a few minutes. Its lack of constraints or, more precisely, its ability to propose impossible solutions is actually extremely useful here. When we as architects have spent years thinking in a certain way, AI comes along and asks us the big question: what would happen if…?

The key is that we need to understand this ideation for what it is. When what we want is for that idea to become architecture, that is when form needs rules, spatial relationships, hierarchies, layers of information, families, thermal properties, orientation, thicknesses, openings, systems, regulations and, above all, physics. That is where Generative Design has a very clear advantage, because its working framework already starts from those constraints and criteria. Architecture begins to gain substance when data enters the picture.

The case studies are genuinely interesting. In OMA’s case, the team used generative design to study the envelope and the stands of Feyenoord Stadium in depth and managed to add no fewer than 600 seats while maintaining the C-values they were looking for. Meanwhile, the MG AEC team focused on daylight, the window-to-wall ratio and solar potential, linking the Generative Design system in Revit with Dynamo to evaluate building performance from the very first sketches. When the project criteria are clear, the generative design machinery iterates with astonishing depth and speed.

Neural CAD takes the conversation from the prompt environment directly into the model

This is where Neural CAD comes in, probably the most interesting concept of the moment if we work in architecture, BIM or computation in the construction field. Autodesk presents it as a new class of generative technology based on neural networks, and describes Neural CAD for Geometry as a system capable of creating precise, editable CAD geometry from a text prompt and the spatial constraints already present in the design or expressed as part of the prompt.

Do we need to say it again? At the beginning we were talking about an AI that can generate ideas, ideas that in the vast majority of cases are completely disconnected from what is possible to build. Then we moved on to an AI that understands physics and structure well enough to propose a solution in the language of architects. Now we take another step forward and arrive at an AI that, taking into account the existing context or the one we present to it, can give us a valid design ready to be incorporated and edited as part of the evolution of our work in CAD.

The difference is enormous, the evolution is simply astonishing, and the doors that Neural CAD opens are practically infinite. We are talking about editable geometry, directed and with technical intent.

Neural CAD for Buildings is, according to Autodesk, the foundation model for AEC. We find it within Forma, and it allows us to move with enormous speed from a conceptual massing model to the different layouts and systems of a well-detailed building. The key here lies in the word “translate,” because it implies continuity between decision scales that until now lived separately outside the architect’s mind: volume, floor plan, spatial organization, system. The AI we see today is beginning to understand architecture as the interrelated whole that we learn to see at university.

Shall we dare to take one step further? When we bring this into the language of BIM, the results move up a level. IFC is, as we already know, a standardized digital description of a building or environment. Its schema encodes identity and semantics, as well as attributes such as material, colour or thermal properties, and also relationships such as location and connections. When we say “window” within a BIM environment, we are talking about an element with meaning, properties and links, not a simple void cut out of an image or a gap in a line. When this is the context Neural CAD works with, its capabilities go far beyond simply drawing lines on a screen.
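To make the difference between a shape and an element tangible, here is a minimal sketch in Python. It is a toy model, not the IFC schema and not the API of any IFC library: the `Element` and `Relationship` classes, the `hosted_in` relationship name, the identifiers and the property values are all hypothetical. Only the class names `IfcWall` and `IfcWindow` are borrowed from IFC, as labels.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Element:
    global_id: str                 # identity: a stable handle for the object
    ifc_class: str                 # semantics: what kind of thing it is
    properties: dict = field(default_factory=dict)  # attributes


@dataclass
class Relationship:
    kind: str      # hypothetical relationship name, e.g. "hosted_in"
    source: str    # global_id of the related element
    target: str    # global_id of the element it relates to


# Hypothetical project data, for illustration only.
wall = Element("wall-001", "IfcWall",
               {"material": "brick", "thickness_m": 0.30})
window = Element("win-001", "IfcWindow",
                 {"colour": "white", "thermal_transmittance": 1.4})
relations = [Relationship("hosted_in", window.global_id, wall.global_id)]


def host_of(element_id: str, rels: list) -> Optional[str]:
    """Return the id of the element hosting `element_id`, if any."""
    for rel in rels:
        if rel.kind == "hosted_in" and rel.source == element_id:
            return rel.target
    return None


print(window.ifc_class, "is hosted in", host_of(window.global_id, relations))
```

A void cut out of an image can answer none of these questions. An element modelled this way can tell us what it is, what it is made of and which wall it belongs to, and that is the kind of context a system needs in order to reason rather than merely draw.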

Here we are at a point where the boundary between text-to-image and text-to-BIM is beginning to shift. Autodesk Research has already presented work such as TileGPT, where the system completes designs by adding orientations, windows and interior walls until it arrives at a valid proposal that can be transferred to our BIM software for later editing and analysis. And at Autodesk University, the KLH Engineers group has shown how machine learning is capable of generating walls in Revit with the correct thicknesses from simple AutoCAD plans and of placing door and window families using the logic of its algorithms. The translation from an abstract scheme to a genuinely usable model is already here.

This is where many firms are going to start seeing how AI becomes truly useful in the field of architecture. Getting the scheme or the inspirational image needed for marketing, competitions or visual exploration requires one set of tools and a team with very specific capabilities, but that is not what a practice mainly does. Meanwhile, the day-to-day reality of a project calls for something completely different: continuity with Revit, continuity with IFC, continuity with analysis, coordination and oversight.

In that field, Autodesk presented Forma Building Design in September 2025 as a cloud solution for schematic design that combines different modelling tools, generative AI and real-time analysis. It also includes connections to Forma Site Design and to Revit, making the transfer of information between the sketch and BIM extremely efficient. This is where Neural CAD makes sense. This is where, in day-to-day work, we are faced with a tool capable of greatly lightening our workload.

So much so that it actually changes our role as users of these tools quite significantly. With classic visual Generative AI, our work usually consists of writing prompts, choosing the results and guiding the aesthetic direction. With Neural CAD and the connected workflows we have just seen, our task begins to look more like defining objectives, selecting systems, evaluating variants and guiding the translation between formats or information systems. We are still designing, of course, but we increasingly design through decisions, rules and hierarchies.

Autodesk Forma, Revit and the architect’s new role

The architect’s role changes with these tools. The degree of change depends on our usual way of working and on the type of projects under our responsibility, but the change is real. Where it is perhaps most noticeable is in Autodesk Forma.

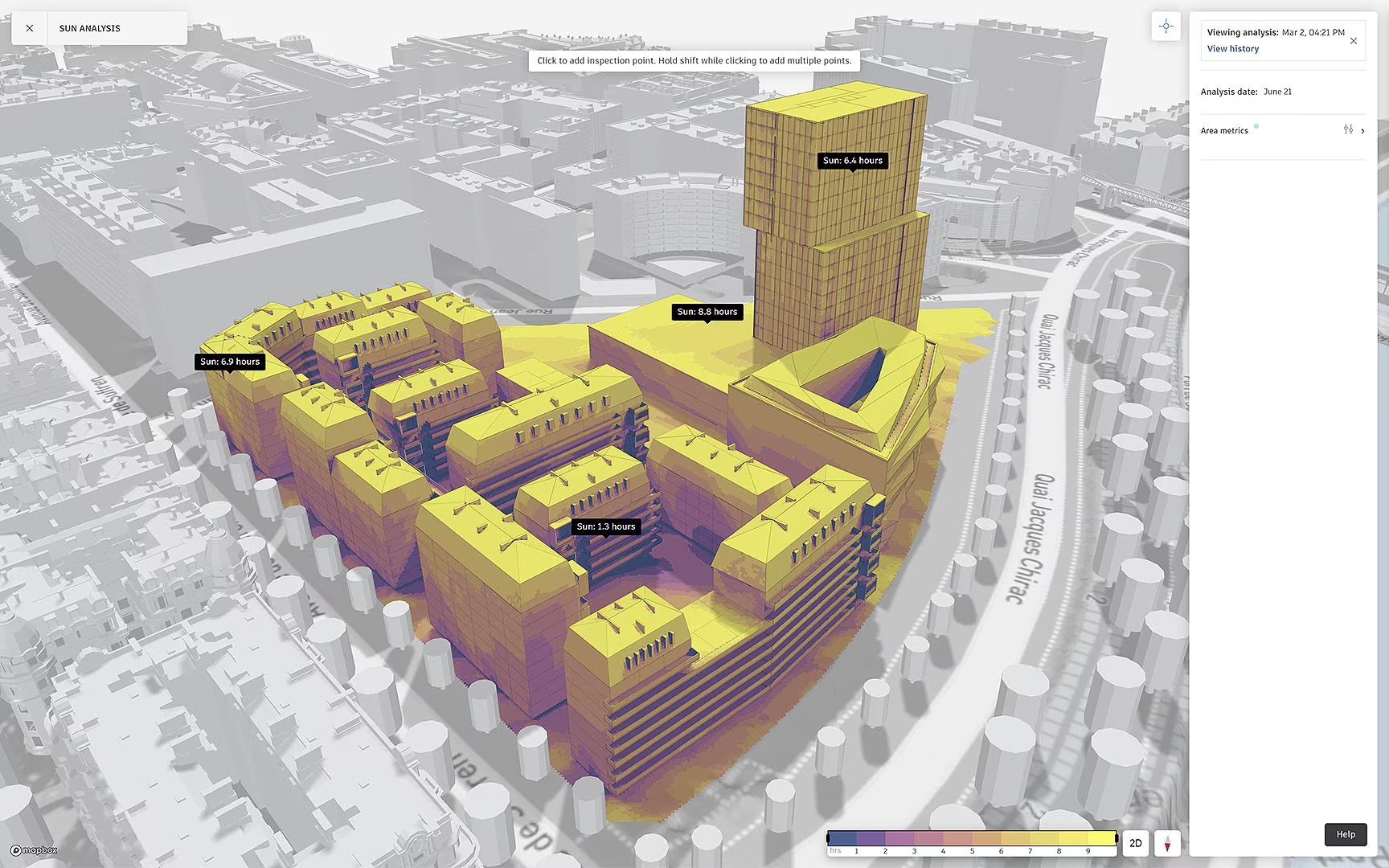

Autodesk presents Forma Site Design as an environment with real-time AI analysis of noise, wind, embodied carbon, daylight potential, microclimate and solar energy. Forma allows us to carry out predictive analysis for wind and noise in real time, with the aim of supporting different design decisions from the earliest stages. This approach goes much deeper than it may seem. It means that analysis stops being a later checkpoint and becomes an ongoing conversation about geometry.

Until very recently, when we talked about AI and generative design in architecture, we thought of lighter and more stylized forms, of optimizing certain structural components, or of managing to create components using less material. In this field AI is still a great help, of course, but now it is beginning to carry more weight in reasoning at the level of the complete system.

We are looking at models capable of operating directly at building systems level, reasoning about HVAC, lighting and more. We are looking at AI-assisted workflows that can design a wall assembly with sustainable materials, translating our assembly sketches into representations that capture components and relationships, and then assessing the material options and aligning them with project goals and market options.

Here AI acts as support for decisions on constructability, environmental performance and technical selection, precisely in an area where, as architects, we have to retain control over the criteria.

All of this leads us to a fundamental question: authorship. In a classic workflow, drawing a line was equivalent to fixing a decision. In these new environments, our role often consists of framing the challenge, selecting the variables, accepting or rejecting the suggested solutions, and guiding the system in a specific direction. Of course we have creative control, and everything AI produces will be editable as well as aligned with our intention, but authorship shifts from the isolated stroke to the direction of the system as a whole.

The ethical dimension appears right here, because the more autonomous these tools become, the more important transparency, data traceability, intellectual property protection and responsibility for the built result become. In this context, Autodesk articulates its Trusted AI Practices around responsibility, transparency, reliability and security, and that is the foundation we rely on when we work with its tools.

From here on, the future that is beginning to take shape for architecture is simply fascinating. It looks far less like a replacement of the architect and much more like an expansion of our capabilities. AI automates repetitive tasks, offers us predictive insights and places us in a supervisory position when a more manual intervention is not required.

We are on the verge of working with co-pilot systems that review compliance, performance and coordination while we design. It is a logical journey: from the text-to-image we started from, to text-to-BIM as our second stop, and far beyond. Logical, yes, but no less surprising for that, and a clear sign of a paradigm shift in how architecture is practised.

All things considered, Generative AI and Generative Design stop being rivals and begin to function as evolving parts of the same ecosystem. The former remains excellent for opening up possibilities, language and formal direction. The latter continues to provide structure, performance and verification. And drawing on both, Neural CAD is creating a completely new territory in which an idea can gain semantics, systems and analysis from the earliest stages. For those of us who work in architecture, this means that we can devote much more time to the more artistic aspects of our work, knowing that the ecosystem will accompany us from the first sketches and make the rest of the process easier.