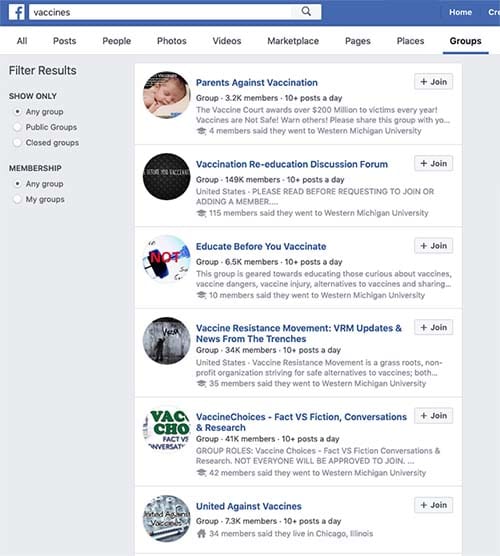

Facebook and WhatsApp have been slow to react to the fake news crises that have spread across both networks. Recently, however, it has been encouraging to see WhatsApp step up its efforts to smother fake news. Limiting the number of people to whom users can forward messages slows the wholesale spread of untrustworthy articles, and labeling messages with the number of times they’ve been forwarded gives users more information about the messages they’re receiving.

A report by Reuters, however, shows that, quite rightly, the WhatsApp team are going even further in a bid to stamp out the spread of dangerous fake news across the network.

WhatsApp has launched a new fact-checking service for its Indian users

Reuters reports that WhatsApp has set up a new fact-checking line in India. The news comes at a crucial time, as Indians are going into national elections later this month. With a population of well over a billion people, the national elections in India are the biggest democratic exercise on the planet, so it is easy to see why WhatsApp appears to be beefing up its fake-news-fighting operation in the build-up to such an important event. It is estimated that WhatsApp has well over 200 million users in India.

This new move sees WhatsApp teaming up with a local startup called Proto. According to a WhatsApp statement, users will be able to share messages with Proto so that the startup can verify their authenticity. With 200 million users in the grip of a fake news crisis, this is quite the remit for a local startup. In fact, since the WhatsApp statement announcing the partnership earlier this week, it has emerged that the task is indeed too large for the Proto team to carry out.

A subsequently released FAQ page on the Proto website explains that not all users will receive a response if they send messages to the startup. The page says, “The Checkpoint tipline is primarily used to gather data for research and is not a helpline that will be able to provide a response to every user. The information provided by users helps us understand potential misinformation in a particular message, and when possible, we will send back a message to users.”

The Proto partnership is more about learning about fake news than preventing its spread in the immediate-to-short term.

The FAQ page goes on to say, “We would like to verify every rumor, but we know that will not be possible given the diversity of information we will receive and the limitations of any verification research.” When both Reuters and BuzzFeed ran tests on the service, they confirmed that not all messages get a response from the Proto team. The Reuters team had received no response after two hours of waiting, and the BuzzFeed staff reported no responses to their messages even after 24 hours.

This new effort from WhatsApp has to be applauded, but again it seems like too little, too late. If this method of collecting data proves efficient, the WhatsApp team will be able to learn a lot about the types of fake news stories people are sending via the messaging app. Unfortunately, this project will do little to nothing to prevent the spread of fake news during the campaigning season for the Indian national elections. As one TED research fellow who specializes in misinformation told BuzzFeed, “This should have happened years ago.”

If you receive a message you believe to be fake, you can send it to the new fake news tipline via WhatsApp at +91-9643-000-888. You might not receive an answer to your particular message, but you will help the long-term battle against the dangerous spread of misinformation.