As toxic as Facebook can be for us, it is even worse for Facebook moderators.

We first heard about the lives of Facebook’s moderators last year when Motherboard published an inside look at the company’s content moderation process, policies, and challenges.

Instead of enjoying all of the tech company perks from cereal bars to craft beer and foosball, content moderators experience high rates of drug abuse, PTSD, and anxiety disorders.

Moderators often work as third-party contractors in a low-pay, high-stress environment. These people see the absolute worst sides of humanity from blood, guts, and gore to hate crimes and abuse.

Unfortunately, it doesn’t seem like much has changed since August 2018. Facebook still treats moderators like second-class citizens. Worker safety still falls by the wayside.

A stark inequality problem

A couple of months back, The Verge’s Casey Newton wrote an investigative piece of his own. He found another set of disturbing revelations about Facebook and its content moderators.

The piece primarily covered issues facing the moderation staff like exposure to violent content and conspiracy theories. However, the report also highlighted the vast difference in the work environment and compensation between typical Facebook workers and content moderators.

The average Facebook employee earns about $240,000 per year in total compensation. The average person working in the company’s Phoenix-based moderation center earns about $28,800.

Facebook’s latest campus, designed by architect Frank Gehry, boasts its own redwood forest and plenty of green space. It is ideal for decompressing after a stressful day on the job. Content moderators don’t have that luxury.

The psychological impact of violent content

According to Psychology Today, violent content from TV news can increase the risk of PTSD, anxiety, and depression. Reportedly, watching the news cycle after a mass tragedy can increase your chances of developing something called vicarious traumatization.

Facebook moderators, by contrast, aren’t seeing humanity’s worst events through the filter of a cable news station. They’re on the front lines. So as one might imagine, the impact of these videos is likely much more significant.

The Verge report describes one instance of a woman named “Chloe” who is asked to moderate a Facebook post in front of a group of trainees.

The post in question depicts a man being stabbed to death while begging for his life. Chloe’s job in this scenario is to tell the group whether the post should be removed. Most people never have to see this type of thing, much less calmly describe the scenario to co-workers during routine training.

The recommended remedy for this vicarious traumatization is to step away from disturbing content, take a break from the news, and try to do something positive. Valid, sure, but when it’s your job to look out for this stuff, there’s no real escape. That’s a tremendous amount of stress to carry around for $28k a year.

It’s clear that Facebook knows the value of creating positive workspaces for its employees; again, see the redwood forest as a point of reference. However, it does not look like the company is doing enough for its moderators.

Conspiracy theories spread

Graphic content can have a huge impact on the well-being of the people who work inside the moderation centers. However, Newton found another disturbing trend that has wider negative implications for society.

Apparently, reviewing conspiracy theory posts can spread those beliefs to the moderators themselves. Basically, those flat-earthers might be doing even more harm than they realize.

The article mentions a moderator who says he “no longer believes 9/11 was a terrorist attack.” Another walks the floor talking about the flat earth theory.

Who should deal with content moderation, anyway?

Moderators are taking action. Two former moderators have joined a lawsuit against the company, alleging that their PTSD symptoms were brought on by reviewing violent images on the job.

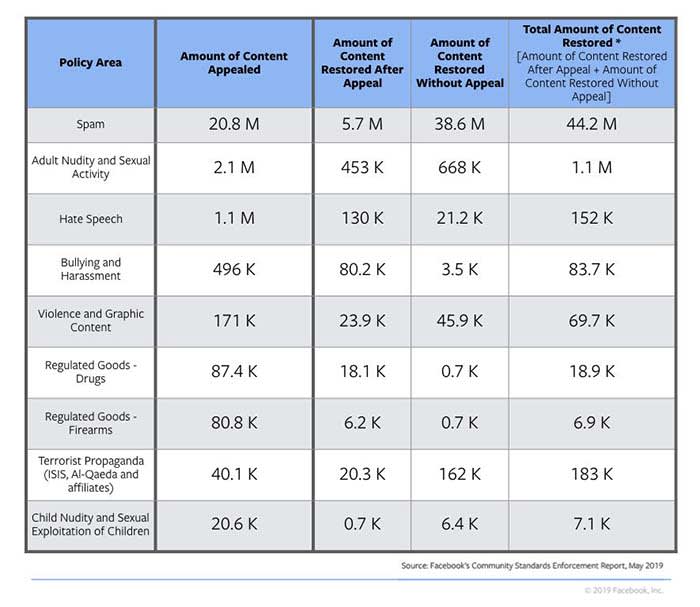

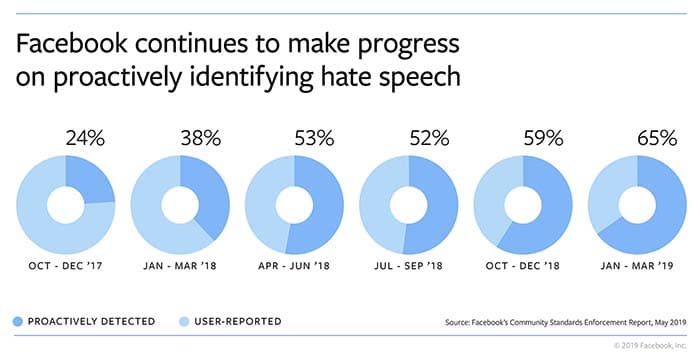

As it stands, Facebook says that AI flags about 38% of the hate speech it detects. The company has plans to improve its AI moderation effort, but there’s a long way to go. Until then, there still needs to be a human moderator to make sure nothing heinous slips through the cracks.

Unfortunately, protecting the rest of us from Facebook’s horrors comes at the expense of a safe workplace and a healthy mind.